50 Humanitarian IM Tips

Tips for organising and managing data in humanitarian response

Created by Simon Johnson / @simon_b_johnson



This guide is intended as a quick read for anyone who has or is going to be working in information management in a humanitarian context.

Many of these tips will hopefully seem obvious, but they address mistakes that are made repeatedly in this sector.

A temporary office after Typhoon Haiyan

Well-managed and accurate data are key to making informed decisions within the humanitarian sector. Information management can help to shed light on foreign or rapidly changing environments, providing context to decision makers. Good, well-managed information flows can make organisations more responsive and efficient, leading to increased effectiveness.

The tips here should not be considered hard and fast rules, but rather general guidance. The context can sometimes require different and unique approaches.

Each page provides a tip and a brief description. Some tips are followed by extra information and links to resources for further reading and learning.


The Basics

Use a spreadsheet for numerical data

There have been many examples in past humanitarian responses of numerical data being collected in a word processing document when they would be more suited to a spreadsheet.

This means simple aggregation, operations and analysis cannot be carried out without first importing the data into a spreadsheet. Word tables may also be formatted in a way that does not copy well to a spreadsheet, meaning extra work before they can be used for analysis.


Microsoft Excel is the most commonly used spreadsheet, but there are free-to-use alternatives with slightly less functionality.

Google Spreadsheets: Webpage

Open Office: Webpage

Connect with the community of Information Managers working in the area

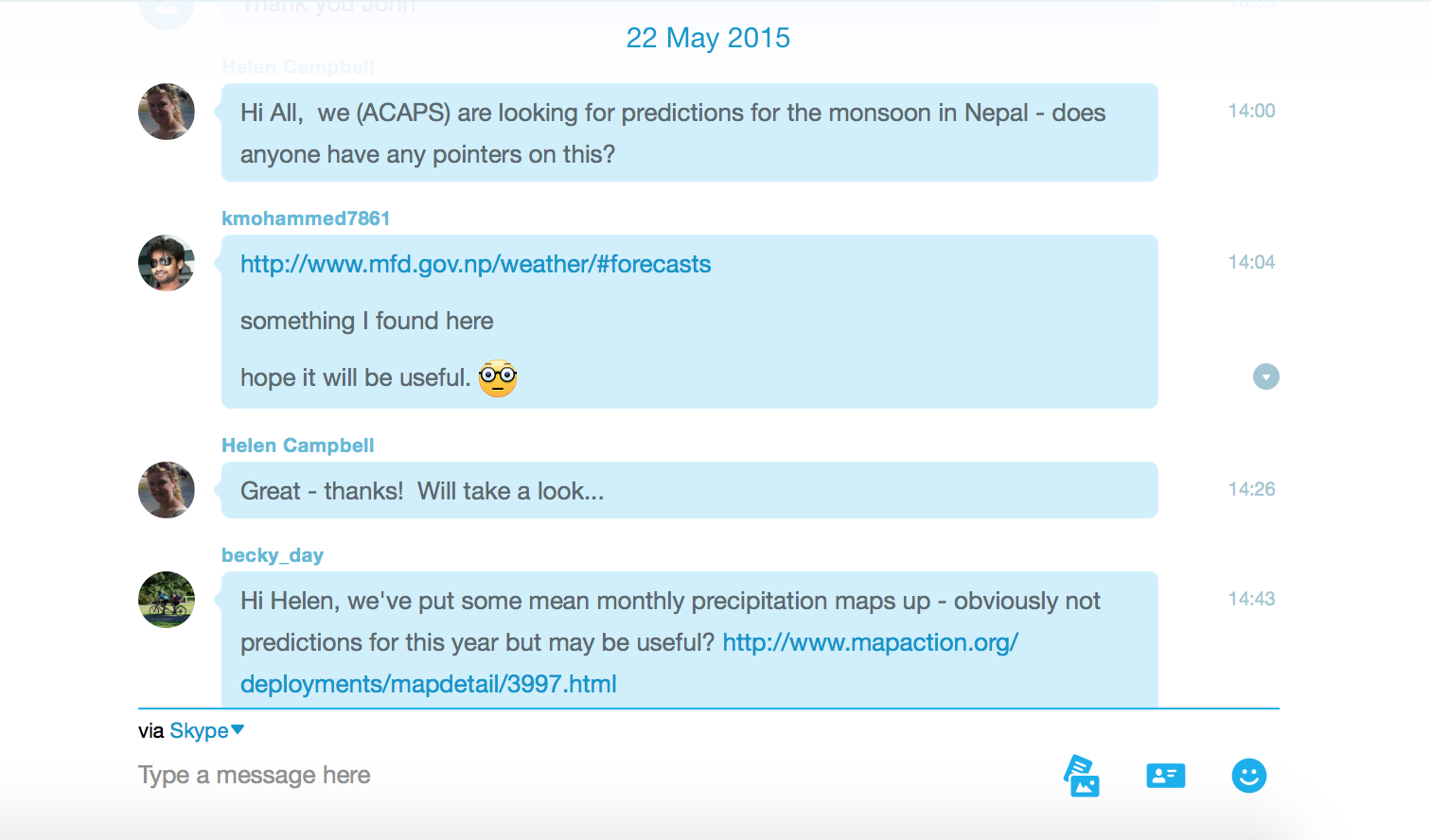

In most places where humanitarian work is taking place there will be other organisations working on similar projects. By attending local meetings and joining relevant Skype groups you can make contact with other information managers. By sharing knowledge and collaborating on problems, work can be completed quickly and efficiently.

Save Often

This is a lesson most people learn quickly through practical experience. Computers can be temperamental, and it can be soul-destroying to watch your computer crash and lose an hour's worth of work. Save yourself the anguish and save often!

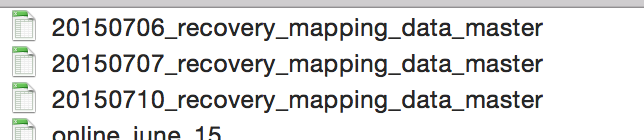

Save Multiple Versions

You might regret that big change you made to your work last week. It's best to save multiple versions as you progress so that you can easily revert any changes you have made.

Use sensible file names

There are numerous attributes that you could include in a file name. The most important aspect is to make sure it is understandable to yourself, but also to others who might use your file without the full context. Some suggested components:

- Date - YYYYMMDD

- Description

- Your Initials

- Version Number

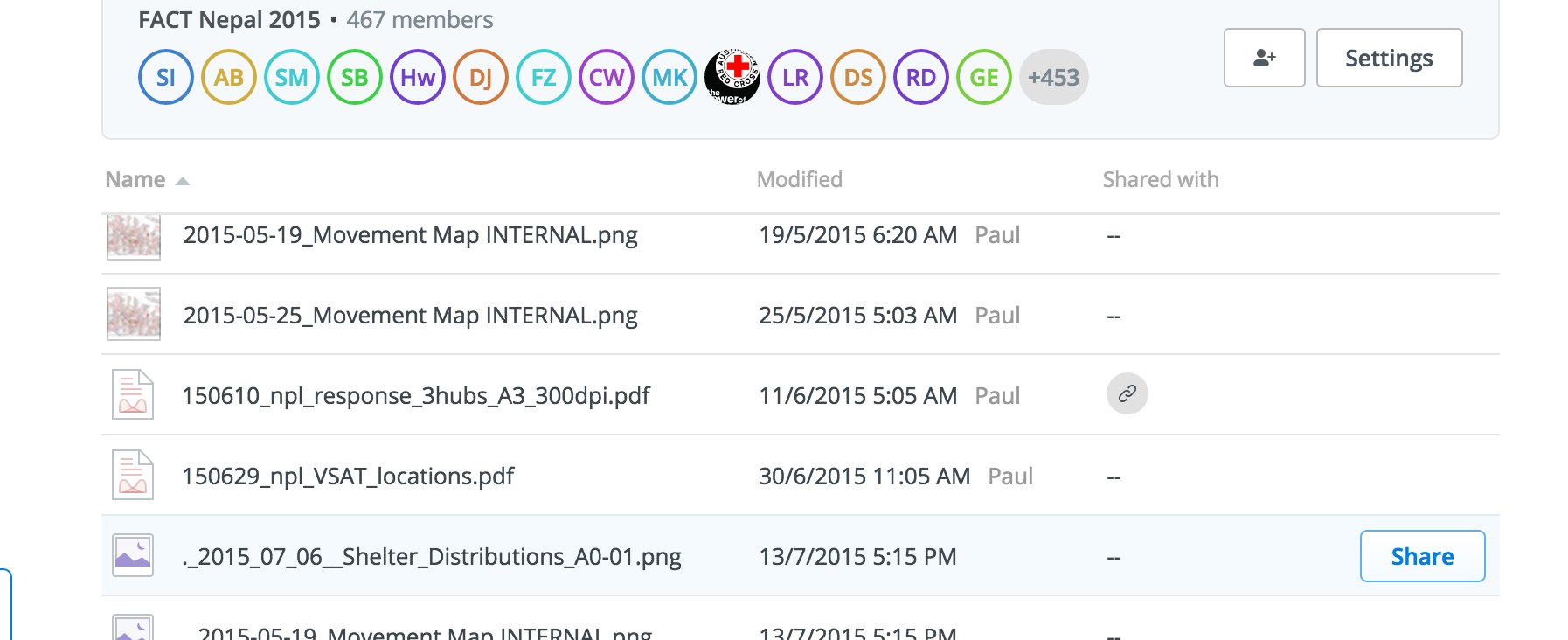
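If you build file names in a script (for example when exporting regular reports), a small helper keeps them consistent. A minimal sketch, assuming the order date, description, initials, version; the order and the `make_file_name` helper are illustrative choices, not a standard.

```python
from datetime import date

def make_file_name(description: str, initials: str, version: int,
                   on: date, extension: str = "xlsx") -> str:
    """Build a name such as 20150425_nepal_3w_SJ_v03.xlsx (hypothetical helper)."""
    # Replace spaces with underscores so the name is safe in URLs and scripts
    slug = "_".join(description.lower().split())
    # YYYYMMDD sorts chronologically when files are listed alphabetically
    return f"{on.strftime('%Y%m%d')}_{slug}_{initials.upper()}_v{version:02d}.{extension}"

print(make_file_name("Nepal 3W", "sj", 3, date(2015, 4, 25)))
# 20150425_nepal_3w_SJ_v03.xlsx
```

Zero-padding the version number (`v03` rather than `v3`) keeps versions in order once you pass nine.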

Back up your data

Data that has been saved can still be lost. This could be due to a virus, a hard disk failure, user error or dozens of other reasons.

With cloud storage services now widely available, work can be backed up online for free.

Dropbox used in the IFRC Nepal 2015 earthquake response


Collecting

Check whether data already exists

It is worth reaching out to the information management community and the government in the area to check that the information has not already been collected by someone else. There is no need to duplicate work that has already been done.

Know how your data will answer your questions

There have been many assessments where most of the data collected was never used. By deciding how you will use your data before you start, you can reduce the number of redundant questions and make sure the right ones are asked.

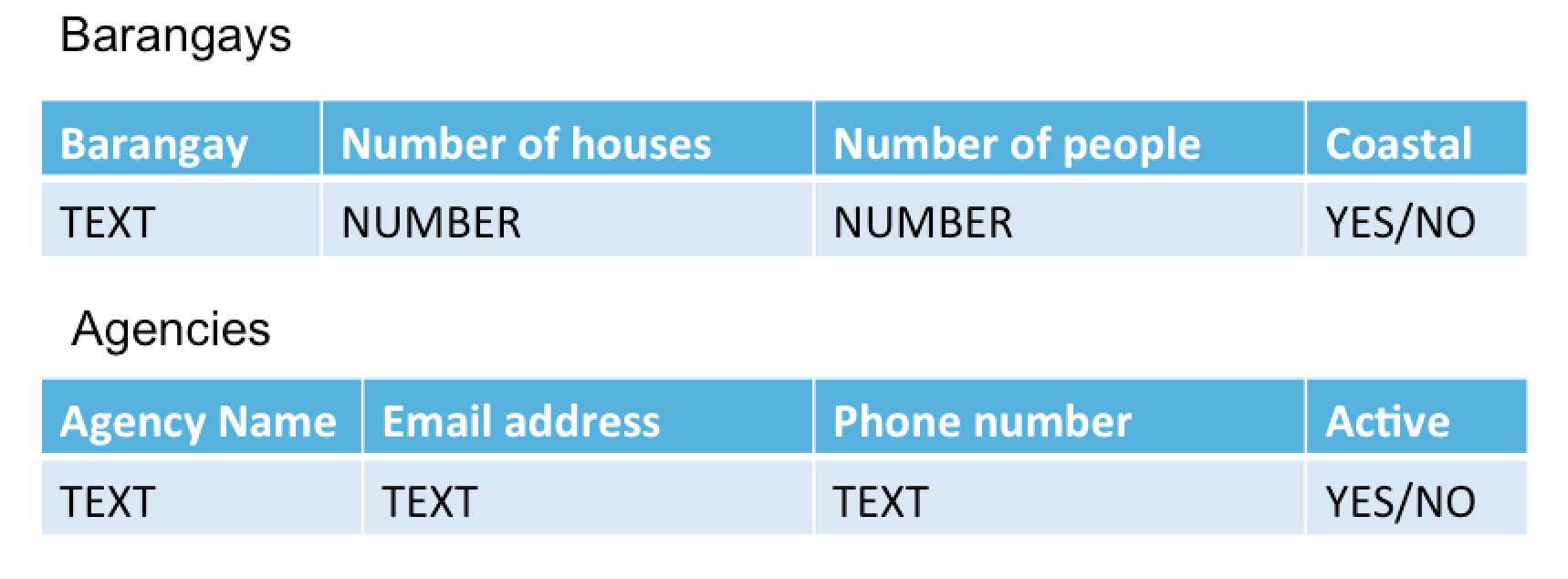

Design your data template before you collect it

Designing your data template before collecting data gives guidance for designing surveys and for collecting data from multiple people. If you want to know the damage in each area, providing a template instead of asking each person an open-ended question ensures that the data will be collected consistently.

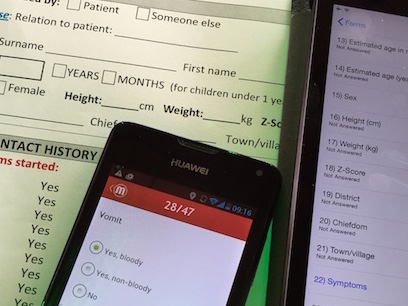
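One way to enforce a template when collating returns from several people is to write every submission through the same fixed column list. A minimal Python sketch; the column names are hypothetical examples for a damage assessment.

```python
import csv
import io

# Hypothetical template: every collector returns these columns, in this order
COLUMNS = ["district_code", "settlement", "damage_level", "households_affected"]

def write_template(rows: list[dict]) -> str:
    """Write rows to CSV using only the template columns, in template order."""
    buffer = io.StringIO()
    # extrasaction="raise" makes DictWriter reject any field not in the template;
    # fields missing from a row are written as empty cells
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, extrasaction="raise")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()

print(write_template([{"district_code": "D01", "settlement": "A",
                       "damage_level": "partial", "households_affected": 12}]))
```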

Use Mobile Data collection

Instead of using paper-based surveys, data can be collected on mobile devices such as phones and tablets. This allows data to be validated on entry, ensuring the data collected is consistent.
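Validation on entry means checking each answer against rules before it is accepted. Mobile tools define these rules in their own form formats; the Python sketch below only illustrates the idea, and the field names and rules are hypothetical.

```python
# Hypothetical rules of the kind a mobile form applies as answers are entered
ALLOWED_DAMAGE = {"none", "partial", "destroyed"}

def validate_entry(entry: dict) -> list[str]:
    """Return a list of problems; an empty list means the entry is accepted."""
    problems = []
    # A choice list stops free-text variants such as "Partial" or "part"
    if entry.get("damage_level") not in ALLOWED_DAMAGE:
        problems.append("damage_level must be one of: " + ", ".join(sorted(ALLOWED_DAMAGE)))
    # A range check stops negative or non-numeric counts
    households = entry.get("households_affected")
    if not isinstance(households, int) or households < 0:
        problems.append("households_affected must be a whole number of 0 or more")
    return problems

print(validate_entry({"damage_level": "Partial", "households_affected": -3}))
```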


There are a number of tools that can help with mobile data collection; some are free and some are paid for.

Open Data Kit - A free to use mobile data collection application

MagPi - A commercial mobile data collection application

Consistent variable naming

If you are carrying out more than one round of surveys, make sure that the variable names used in the data are consistent with the previous surveys. Otherwise it can be very difficult for the person analysing the data to quickly match the questions together.
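If names have already drifted between rounds, a rename map applied before merging is one way to line the rounds up again. A minimal Python sketch; the variable names in the mapping are hypothetical.

```python
# Hypothetical mapping from round-2 variable names back to round-1 names
ROUND2_TO_ROUND1 = {"hh_size": "household_size", "dist": "district"}

def harmonise(row: dict, mapping: dict) -> dict:
    """Rename keys so rows from different survey rounds line up.
    Names not in the mapping are kept unchanged."""
    return {mapping.get(key, key): value for key, value in row.items()}

print(harmonise({"hh_size": 5, "dist": "A", "age": 30}, ROUND2_TO_ROUND1))
# {'household_size': 5, 'district': 'A', 'age': 30}
```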

Use secondary data reviews

The data you want may already exist, just not in a single clean source. Consider whether it is possible to pull this data together by reviewing and collating other data sources.

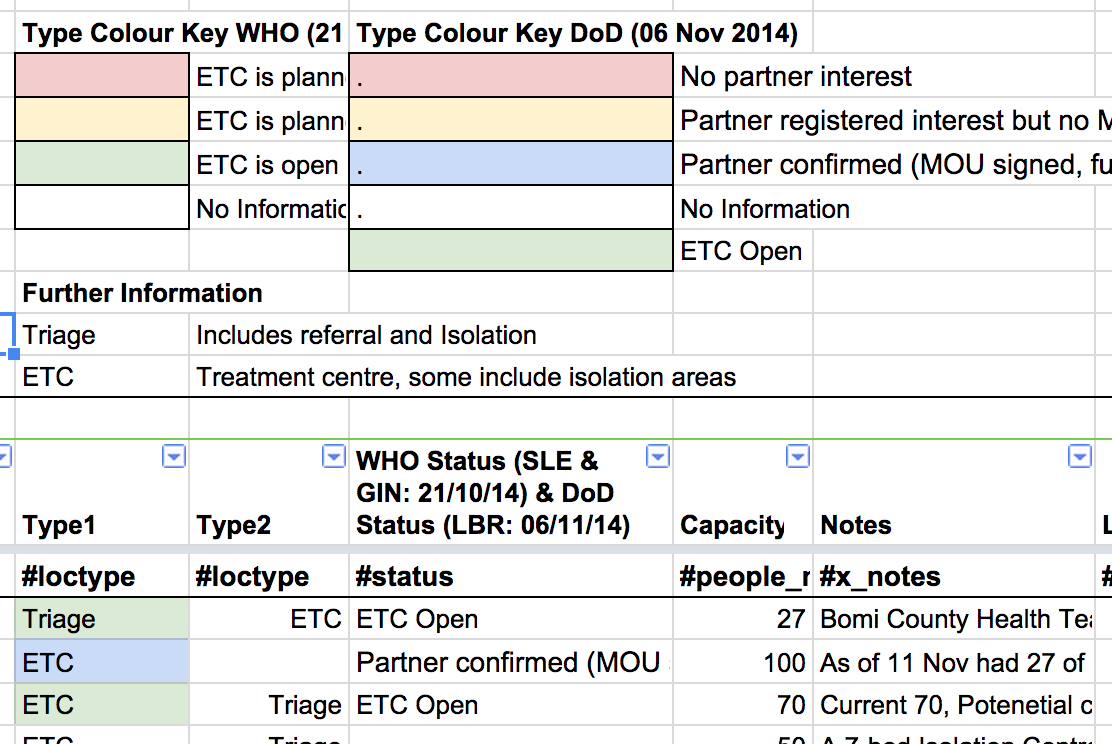

An example of this is the Red Cross compiling a data set of Ebola treatment centres from the news articles that mentioned them, creating a single consolidated data set that did not previously exist.

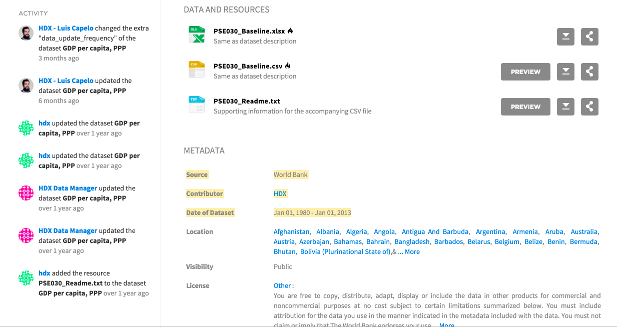

Check the metadata of your data

If you are using or referring to other data, check the metadata on how, when, where and by whom the data was collected. This will give an indication of how reliable the data is and in which ways it can be used.

Understand the difference between quantitative data and qualitative data

Qualitative data refers to stories which are intentionally gathered and systematically sampled, shared, debriefed and analysed. It will help you understand the context, produce insights and think about which decisions have to be made.

Quantitative data refers to information that can be measured and written down with numbers, standardised and normalised. It will enable you to use metrics to make sound decisions.

Spreadsheets

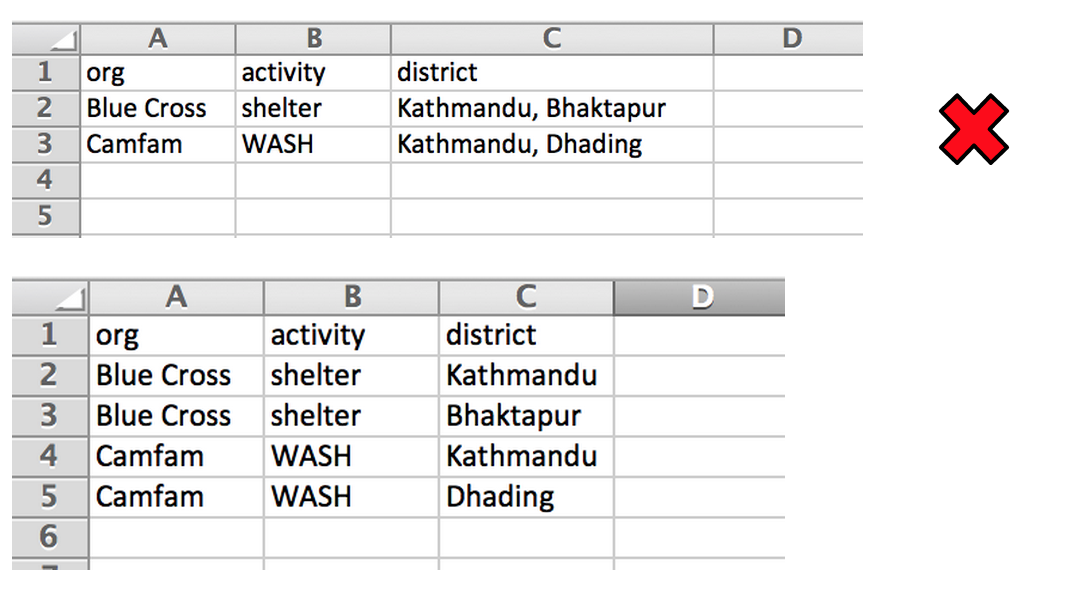

Only one piece of information per cell

Having more than one piece of data in a single spreadsheet cell prevents you from filtering, summing or otherwise processing the individual components of the list. One way to resolve this is to duplicate the row for each component of the list.

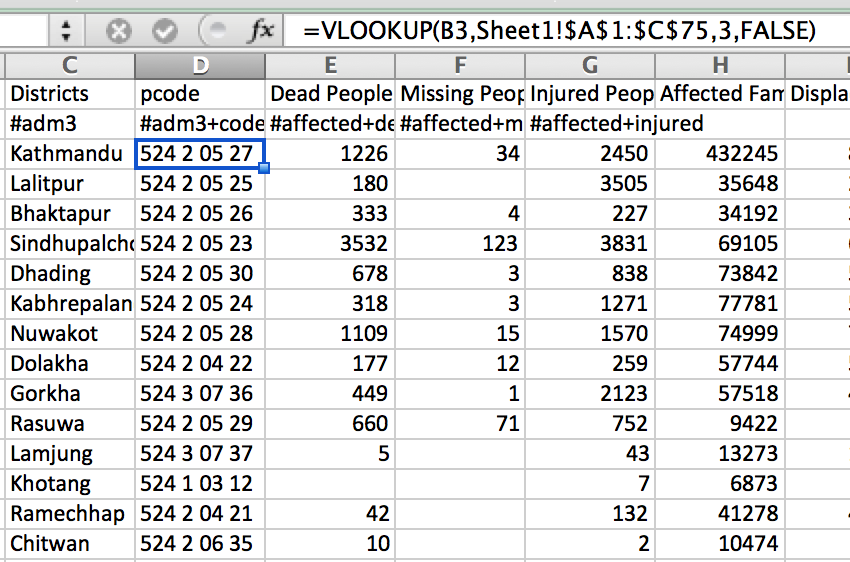
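The "duplicate the row for each component" fix can be automated when a list-style cell has already crept in. A minimal Python sketch, assuming the items are separated by commas; the column names are hypothetical.

```python
def explode(rows: list[dict], column: str, sep: str = ",") -> list[dict]:
    """Duplicate each row once per item listed in `column`."""
    result = []
    for row in rows:
        for item in row[column].split(sep):
            # Copy the whole row, replacing the list cell with a single item
            result.append({**row, column: item.strip()})
    return result

rows = [{"org": "Org A", "sectors": "Health, Shelter, WASH"}]
for row in explode(rows, "sectors"):
    print(row)
# {'org': 'Org A', 'sectors': 'Health'}
# {'org': 'Org A', 'sectors': 'Shelter'}
# {'org': 'Org A', 'sectors': 'WASH'}
```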

Learn VLOOKUP

Often you will need to look up other data based on a value in your data set. For example, you might have the names of the districts you are working in, but also want their official codes. A VLOOKUP can pull through these official codes automatically.


Free learning material for VLOOKUP

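Or learn INDEX(MATCH())

Combining the INDEX and MATCH functions creates a more flexible lookup. Unlike VLOOKUP, the column you search does not have to be the first column of the range, so you can return values from columns to its left.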


Free learning material for INDEX(MATCH())

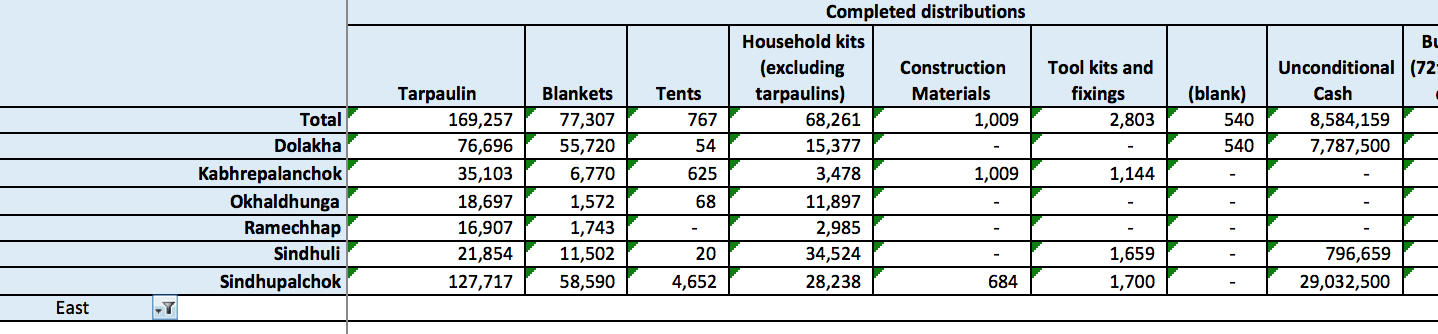

Learn Pivot Tables

Pivot tables are a hugely powerful tool in spreadsheets. They are used for summarising, analysing and quickly manipulating data sets.


Free learning material for pivot tables

Record data at a granular level and use pivot tables to aggregate up and create reports

People often organise their data in the form it will be reported in. However, aggregating the data restricts the ways it can be reorganised in the future if a new need appears. It is always better to record data in the most granular format possible.

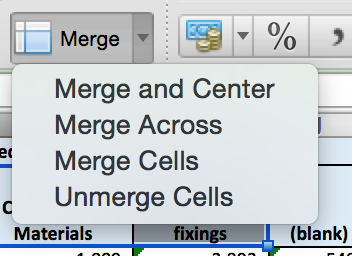
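Granular records can be summed along any dimension afterwards, which is what a pivot table does. A minimal Python sketch with hypothetical distribution records, showing two different reports from the same rows:

```python
from collections import defaultdict

# Granular records: one row per distribution (hypothetical data)
records = [
    {"district": "A", "item": "tarpaulin", "quantity": 120},
    {"district": "A", "item": "hygiene kit", "quantity": 40},
    {"district": "B", "item": "tarpaulin", "quantity": 75},
]

def pivot_sum(rows: list[dict], by: list[str], value: str) -> dict:
    """Sum `value` for each combination of the `by` columns, like a pivot table."""
    totals = defaultdict(int)
    for row in rows:
        totals[tuple(row[col] for col in by)] += row[value]
    return dict(totals)

print(pivot_sum(records, ["district"], "quantity"))  # {('A',): 160, ('B',): 75}
print(pivot_sum(records, ["item"], "quantity"))      # {('tarpaulin',): 195, ('hygiene kit',): 40}
```

Had the data been recorded as district totals only, the second report could not be produced.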

Do not merge cells in a spreadsheet

This can break some functionality in spreadsheets such as pivot tables and filters. An alternative method is to repeat the cell value or use colour to indicate the range.

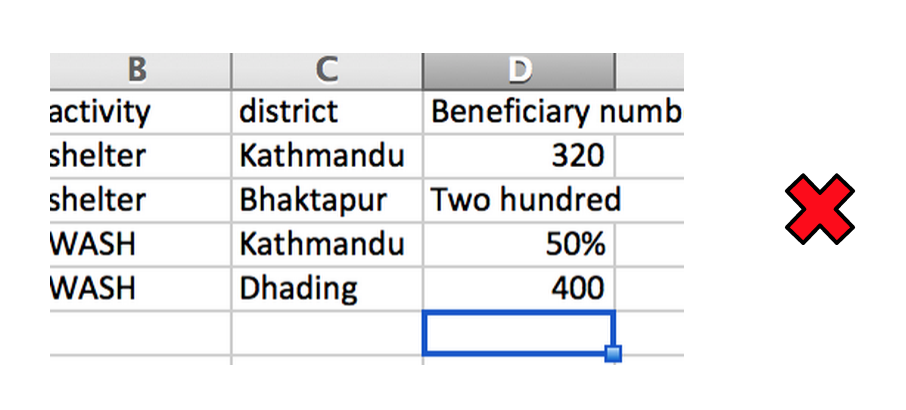
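When you receive a sheet that used merged cells, unmerging typically leaves the value in the first cell only. Repeating it downwards, as suggested above, can be sketched in Python like this (the district names are hypothetical):

```python
def fill_down(values: list) -> list:
    """Repeat the last non-empty value into the blank cells below it."""
    filled, last = [], None
    for value in values:
        if value not in (None, ""):
            last = value
        filled.append(last)
    return filled

print(fill_down(["District A", "", "", "District B", None]))
# ['District A', 'District A', 'District A', 'District B', 'District B']
```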

Keep data types consistent in columns

Try to make sure that columns do not contain mixed data types such as numbers and words.

Keeping the data type the same throughout a column means that data operations (e.g. sum) can be carried out over the whole column.

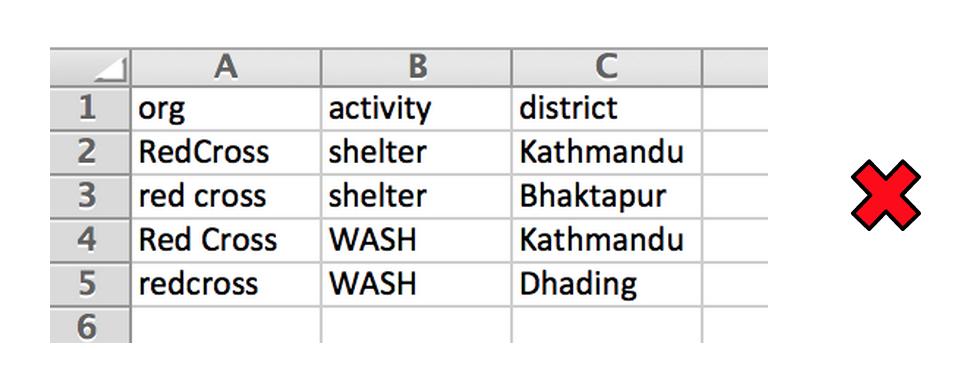
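Before summing a column, it can help to list the cells that are not numbers. A minimal Python sketch; it treats anything `float()` cannot parse, including empty cells, as a problem.

```python
def non_numeric(values: list) -> list[tuple]:
    """Return (row_number, value) pairs that cannot be read as a number."""
    problems = []
    for row_number, value in enumerate(values, start=1):
        try:
            float(value)
        except (TypeError, ValueError):
            problems.append((row_number, value))
    return problems

print(non_numeric(["120", "45", "about 30", "", "12.5"]))
# [(3, 'about 30'), (4, '')]
```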

Keep data names consistent

Consistent data naming means that when a spreadsheet is visualised or summarised, all the correct instances of the data are picked up. Below we have the same organisation spelt in different ways, and a simple filter may not pick them all up.

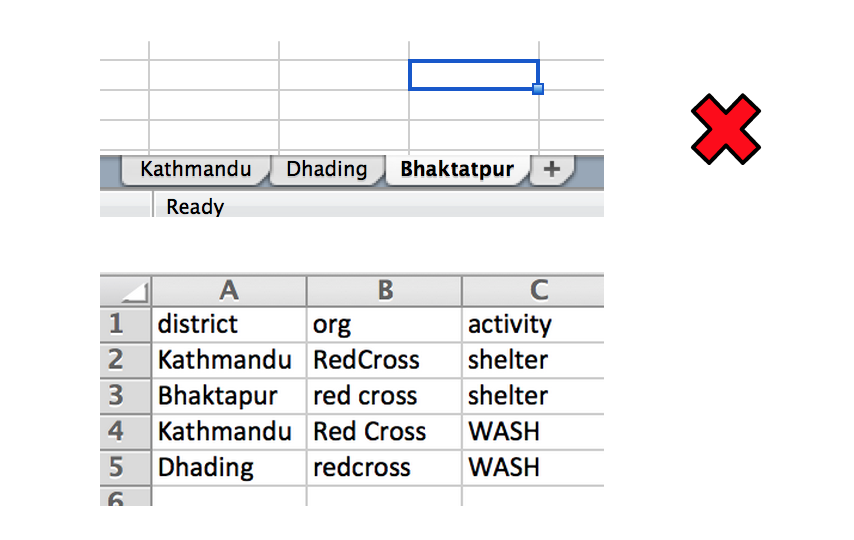

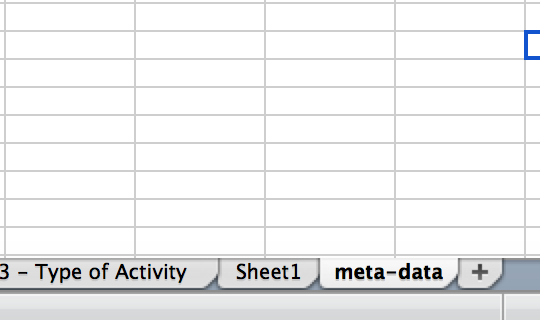
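One way to tidy existing variants is to map a simplified form of each name (lower case, no full stops, single spaces) onto an agreed spelling. A minimal Python sketch; the agreed list is a hypothetical example you would maintain yourself.

```python
# Hypothetical agreed spellings, keyed by a simplified form of the name
CANONICAL = {"red cross": "Red Cross", "unicef": "UNICEF"}

def normalise(name: str) -> str:
    """Map spelling variants onto one agreed name; unknown names are kept as typed."""
    key = " ".join(name.replace(".", "").lower().split())
    return CANONICAL.get(key, name)

print([normalise(n) for n in ["Red Cross", "red  cross", "U.N.I.C.E.F", "Unicef"]])
# ['Red Cross', 'Red Cross', 'UNICEF', 'UNICEF']
```

Names that come back unchanged are worth reviewing by hand and adding to the list.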

Keep all similar data in one sheet or data set

Placing data in separate sheets hinders aggregation. Below is one spreadsheet with one tab for each district. It could have been put into one table with a column for district, as in column A in the second image. If a report is needed for one district, a pivot table can be used to generate it.
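Stacking per-district tabs into one table just means adding the tab name as a column on every row. A minimal Python sketch with hypothetical tabs, each held as a list of rows:

```python
# Hypothetical per-district tabs, each a list of rows
sheets = {
    "District A": [{"site": "S1", "people": 200}],
    "District B": [{"site": "S2", "people": 150}, {"site": "S3", "people": 90}],
}

def combine(sheets: dict) -> list[dict]:
    """Stack per-district tabs into one table with a 'district' column."""
    return [{"district": name, **row} for name, rows in sheets.items() for row in rows]

for row in combine(sheets):
    print(row)
```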

Do not use colour to represent data

When a machine reads the data or it is copied to another sheet, there is a chance that the formatting is lost along with the data it represents. Instead, create an extra column for the data attribute. Colour can still be used to highlight data.

Data Managing

Spot check your data

A spot check is a quick process where you pick 5 to 10 random rows of your data and check them thoroughly. It is especially important to do this with derived data.

It helps to check that your process is working consistently through the whole data set.
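Letting the computer pick the rows avoids unconsciously checking only the ones that look right. A minimal Python sketch; the optional seed is an assumption added so that a colleague can re-check the same sample.

```python
import random

def spot_check_sample(rows: list, k: int = 10, seed=None) -> list:
    """Pick up to k distinct random rows to check by hand against the source."""
    rng = random.Random(seed)  # pass a seed to make the sample repeatable
    return rng.sample(rows, min(k, len(rows)))

for row in spot_check_sample(list(range(1, 501)), k=5, seed=2015):
    print("check row", row)
```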

Check maximums, minimums and sums

When reviewing your data, always check the extremes. If you have someone over 200 years old in your data, you can probably safely assume that something in the process needs correcting.
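The same checks a spreadsheet gives you with MIN, MAX and SUM can be run in a couple of lines. A minimal Python sketch with hypothetical ages:

```python
def summarise(values) -> dict:
    """Quick extremes check: look at these numbers before trusting the column."""
    numbers = [float(v) for v in values]
    return {"min": min(numbers), "max": max(numbers),
            "sum": sum(numbers), "count": len(numbers)}

ages = [34, 27, 203, 0, 61]  # hypothetical data
print(summarise(ages))
# {'min': 0.0, 'max': 203.0, 'sum': 325.0, 'count': 5}
```

Here the maximum of 203 points to an entry error, and the minimum of 0 may be worth a second look too.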

Sense Check

Does your data make sense? If you have more people affected than the population of the area, something has probably gone wrong in the process.
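A sense check like this can be written as a rule that flags rows breaking it. A minimal Python sketch; the column names and figures are hypothetical.

```python
def sense_check(rows: list[dict]) -> list[str]:
    """Flag areas reporting more people affected than live there."""
    return [row["area"] for row in rows if row["affected"] > row["population"]]

rows = [
    {"area": "A", "population": 12000, "affected": 3400},
    {"area": "B", "population": 8000, "affected": 9100},
]
print(sense_check(rows))  # ['B']
```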

Check for duplicates

To quickly find and remove duplicates from your data make use of the sorting function on multiple columns. This will order duplicated rows next to each other.

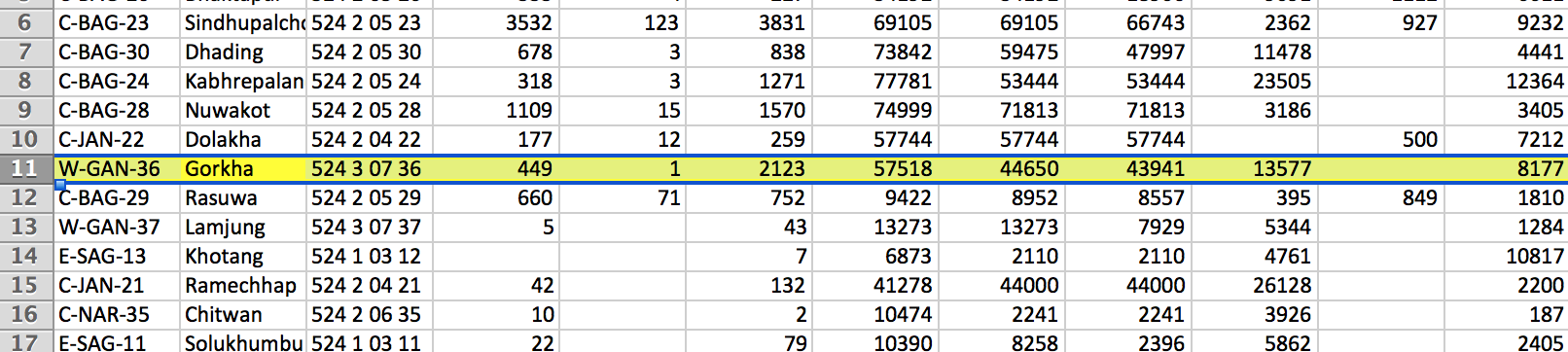

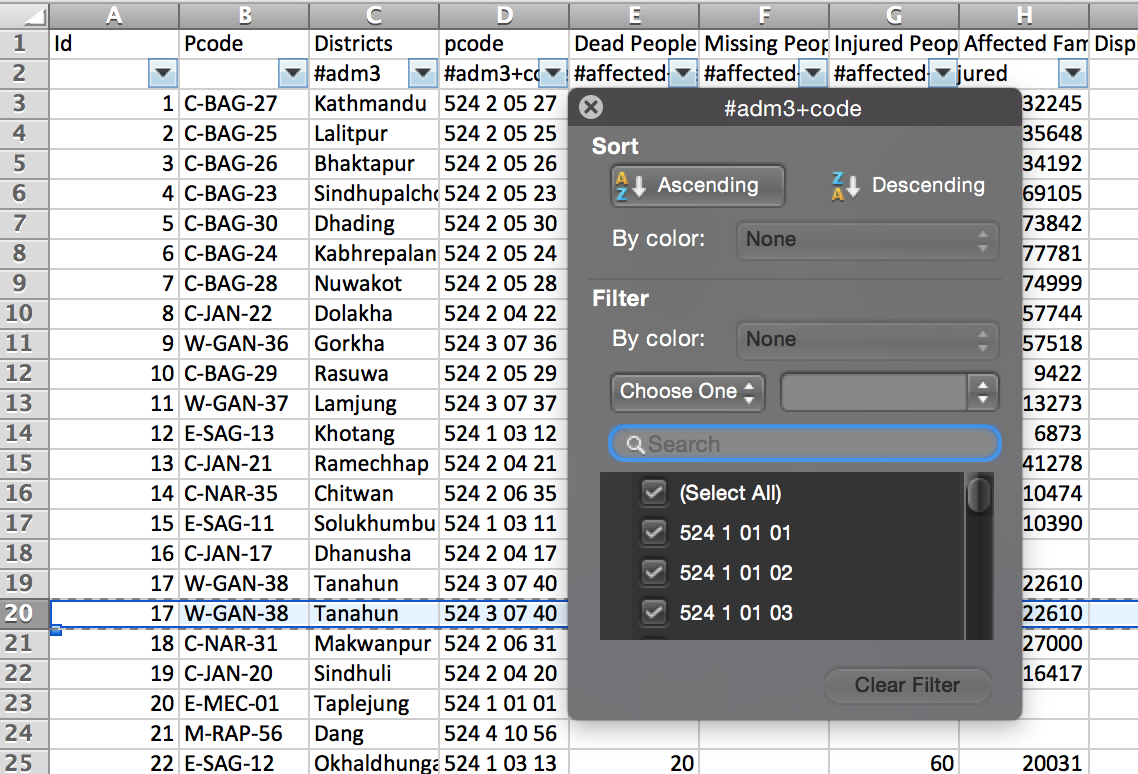

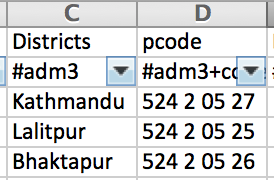
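The sort-then-compare approach described above translates directly to code: after sorting on the key columns, any row identical to the one before it is a duplicate. A minimal Python sketch with hypothetical columns:

```python
def find_duplicates(rows: list[dict], key_columns: list[str]) -> list[dict]:
    """Sort on the key columns so duplicates sit next to each other,
    then return every row that matches the row before it."""
    def key(row):
        return tuple(row[col] for col in key_columns)
    ordered = sorted(rows, key=key)
    return [cur for prev, cur in zip(ordered, ordered[1:]) if key(prev) == key(cur)]

rows = [
    {"site": "S1", "date": "2015-05-01"},
    {"site": "S2", "date": "2015-05-01"},
    {"site": "S1", "date": "2015-05-01"},
]
print(find_duplicates(rows, ["site", "date"]))
# [{'site': 'S1', 'date': '2015-05-01'}]
```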

Use official codes to represent regions and districts where possible

In many countries where humanitarian operations are carried out, there will be official codes for administrative areas. As place names can be spelt in many ways, especially where foreign characters are used, it can be hard to compare and join datasets without these codes. Using them can save many hours.

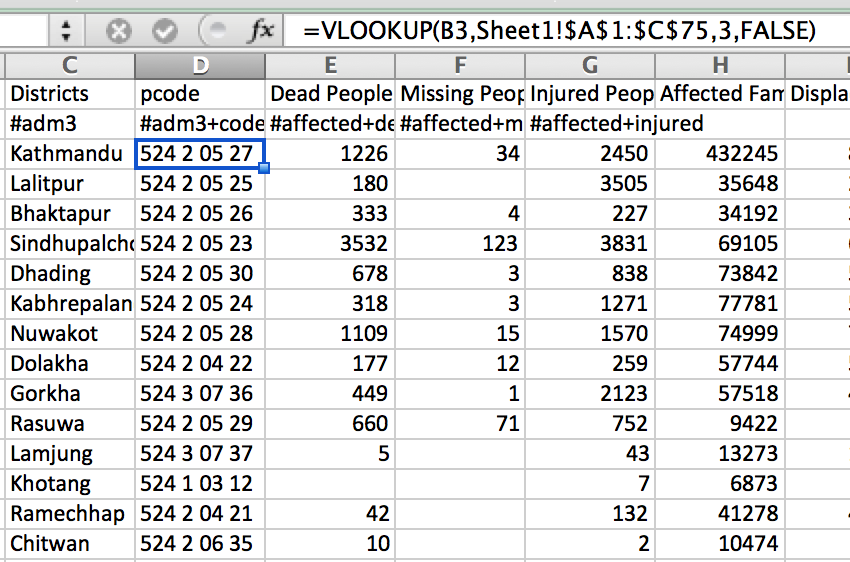

Check relationships

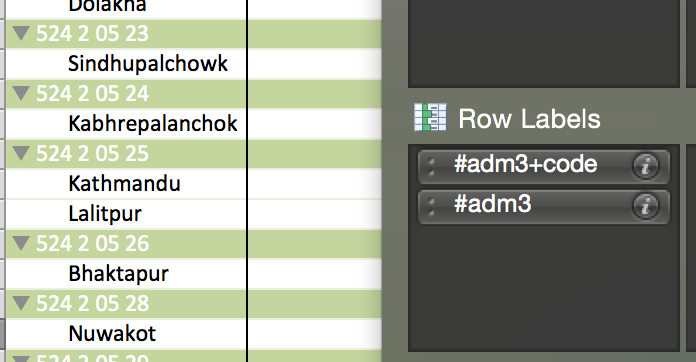

If you expect certain relationships in your data, such as each administrative code belonging to only one place, you can check them with pivot tables. Dropping both fields into the Rows area shows every combination of values in the two columns. Here we can see that 524 2 05 25 has been used twice by accident.
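The same one-code-one-place check can be scripted by collecting the names seen for each code. A minimal Python sketch; the column names, place names and the second code are hypothetical.

```python
from collections import defaultdict

def codes_with_several_names(rows: list[dict], code_col: str = "admin_code",
                             name_col: str = "admin_name") -> dict:
    """Return codes that are attached to more than one place name."""
    names_by_code = defaultdict(set)
    for row in rows:
        names_by_code[row[code_col]].add(row[name_col])
    return {code: sorted(names) for code, names in names_by_code.items() if len(names) > 1}

rows = [
    {"admin_code": "524 2 05 25", "admin_name": "Place X"},
    {"admin_code": "524 2 05 25", "admin_name": "Place Y"},
    {"admin_code": "524 2 05 26", "admin_name": "Place Z"},
]
print(codes_with_several_names(rows))  # {'524 2 05 25': ['Place X', 'Place Y']}
```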

Get coordinates for point data

If you have data that represents a point such as a hospital or a household instead of just naming it or giving its address you could also collect coordinates for it. There are many possible ways of doing this.


A GPS device can be used to get coordinate points. Most smartphones now contain the technology to do this. Mobile data collection applications can also help with the process.

Addresses can be geocoded to get coordinates. However in many developing countries the addresses are not well documented or are non-existent. It's better to record the coordinate data at the same time as data collection.

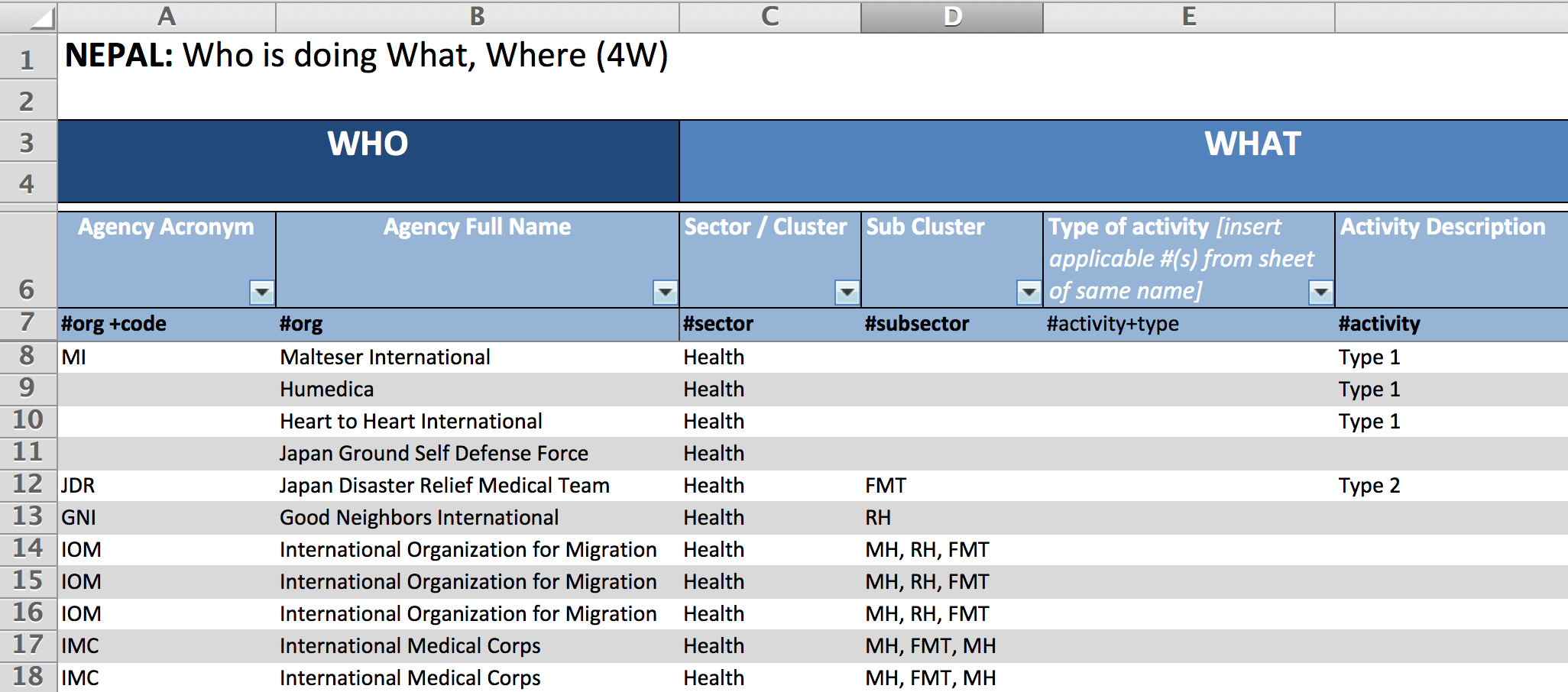

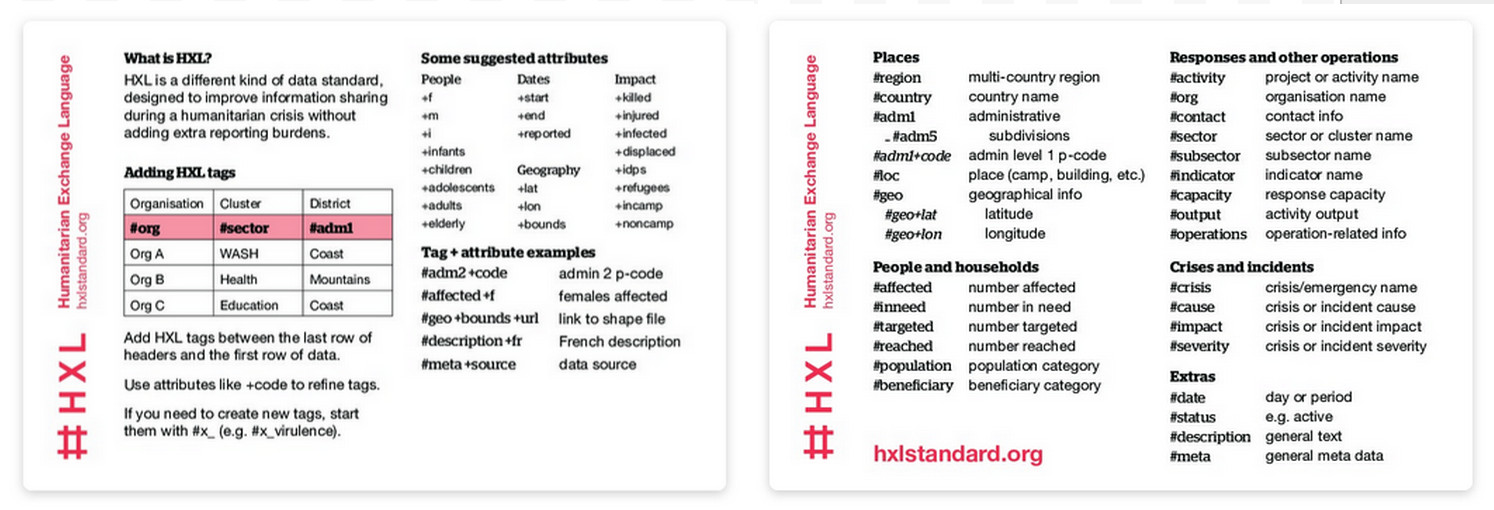

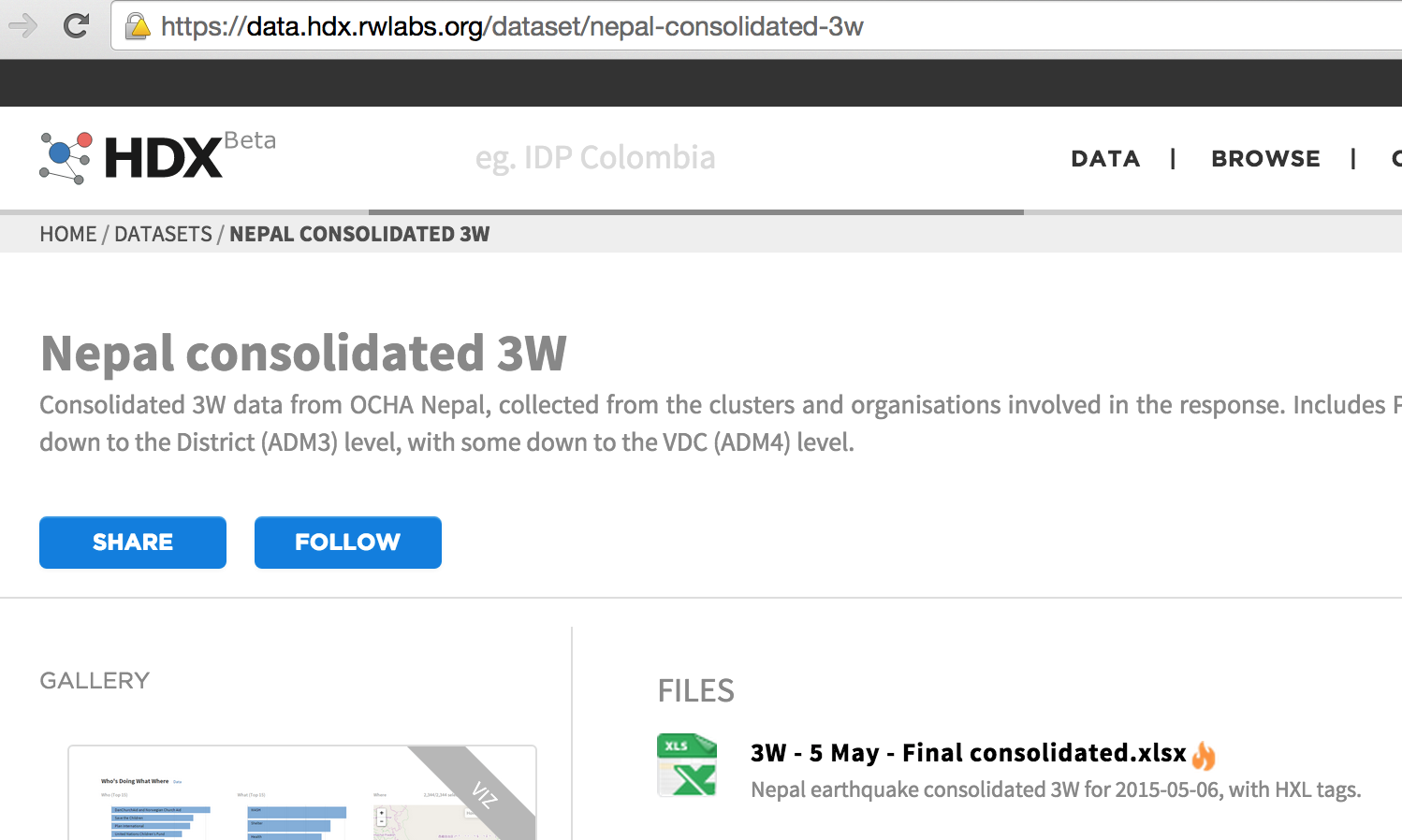

HXLate your data

HXL is a different kind of data standard, designed to improve information sharing during a humanitarian crisis without adding extra reporting burdens. By adding HXL to your data you help to make that data more understandable and easier to process in the future.

3W data with HXL tags (on 7th row) used in the Nepal 2015 Earthquake Response
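HXL works by adding a row of hashtags beneath the ordinary column headers, so people and software can both read the file. A minimal Python sketch of a 3W-style CSV; the headers are hypothetical and the tags are illustrative choices, so check the HXL hashtag dictionary for the right ones for your data.

```python
import csv
import io

# Human-readable headers, with the HXL hashtags that go underneath them
HEADERS = ["Organisation", "Sector", "District", "District code"]
HXL_TAGS = ["#org", "#sector", "#adm2+name", "#adm2+code"]

def to_hxl_csv(rows: list[list]) -> str:
    """Write a CSV whose hashtag row sits directly under the headers."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(HEADERS)
    writer.writerow(HXL_TAGS)
    writer.writerows(rows)
    return buffer.getvalue()

print(to_hxl_csv([["Org A", "Health", "District A", "D01"]]))
```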


HXL website - Go here to learn how to use HXL: http://hxlstandard.org/

HXL Postcard

Visualising

Remove Visual Noise

Removing unnecessary visual elements makes the data easier to interpret.

Alignment

Misaligned elements can make a product look distracting and hard for the user to follow. Aligning elements to a grid makes the product more appealing and easier to comprehend.

Use standard Iconography

When producing reports, maps or dashboards, using standard iconography can help viewers quickly interpret themes and meanings from familiar pictures.


Be aware of colour connotations

Colours have different meanings attached to them. If you colour areas on a map red, some people will assume that it indicates an area of danger, even if that is not your intent. Be aware that colours may carry different meanings in different cultures.

Choose readable colour schemes

Be careful when choosing colours, especially on maps, to make sure everyone can read them. Your product may be printed in black and white or read by someone with colour blindness. Choosing a simple colour scale with varying lightness can solve this problem.

An example of a difficult to read colour scheme


There are good tools out there for helping to pick a good colour scheme.

ColorBrewer is a particularly good tool, with options for selecting schemes that work on black and white printers and for people with colour blindness.

QGIS Preview Mode can show your map in grayscale or simulate colour blindness modes (View -> Preview mode ->)
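To see why a scale of varying lightness survives greyscale printing, here is a minimal Python sketch that steps evenly from a light colour to a dark one. It interpolates in plain RGB, which is a simplification; palettes from a tool such as ColorBrewer are tuned more carefully and are the better choice for real products.

```python
def sequential_scale(light: str, dark: str, steps: int) -> list[str]:
    """Interpolate evenly between two hex colours, from light to dark."""
    if steps < 2:
        raise ValueError("a scale needs at least two steps")

    def to_rgb(hex_colour: str) -> list[int]:
        hex_colour = hex_colour.lstrip("#")
        return [int(hex_colour[i:i + 2], 16) for i in (0, 2, 4)]

    start, end = to_rgb(light), to_rgb(dark)
    scale = []
    for step in range(steps):
        t = step / (steps - 1)  # 0.0 at the light end, 1.0 at the dark end
        rgb = [round(s + (e - s) * t) for s, e in zip(start, end)]
        scale.append("#{:02x}{:02x}{:02x}".format(*rgb))
    return scale

print(sequential_scale("#ffffff", "#000000", 3))  # ['#ffffff', '#808080', '#000000']
```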

Make Maps

If your data has a geographical element it may be better to represent it as a map rather than a data table. There are a few free and paid software and services that can do this.


QGIS is open source software used for creating maps.

QGIS tutorials is a website that contains a lot of learning material for making maps with QGIS.

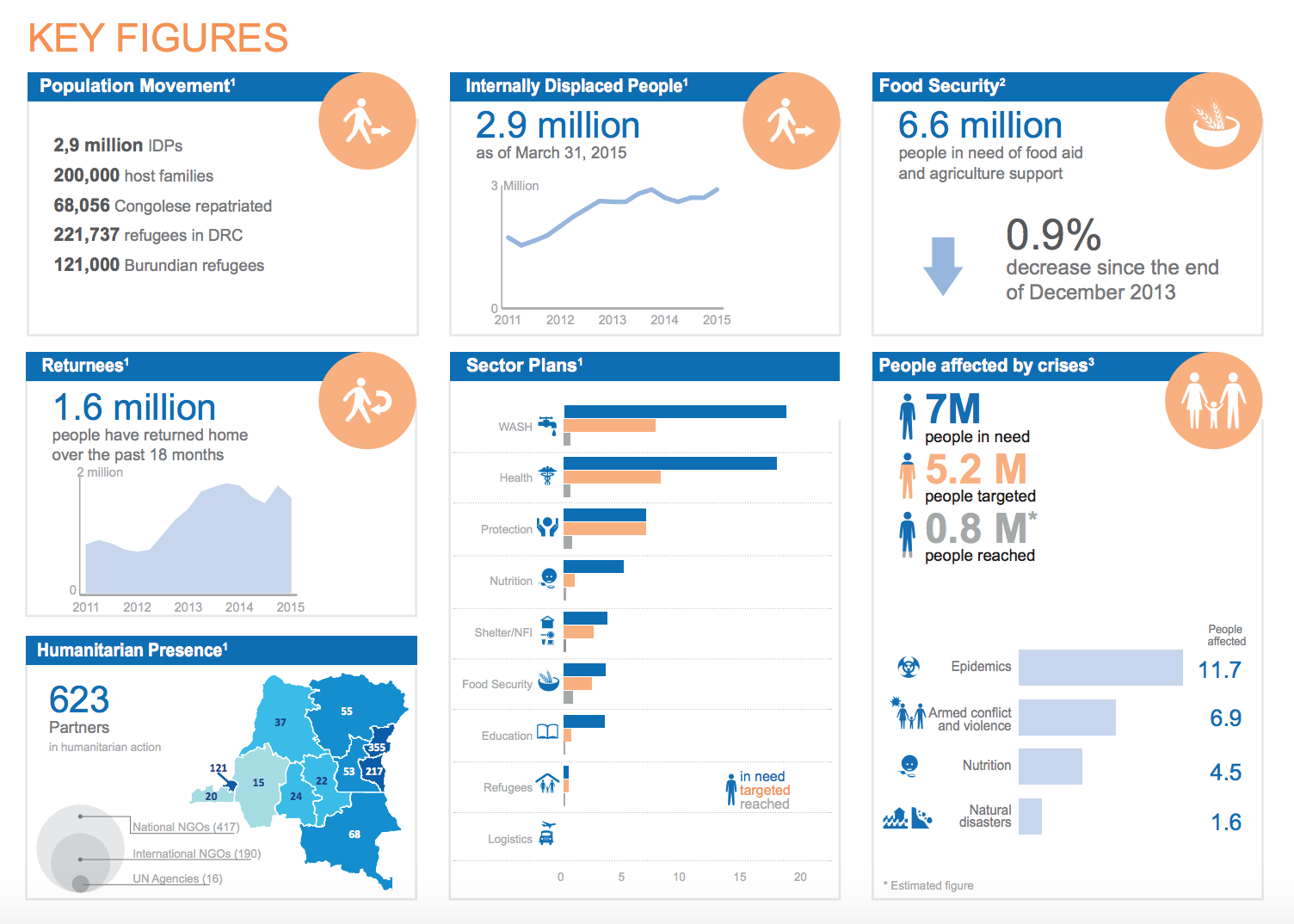

Make Infographics and Dashboards

Your pivot table report may tell a manager or the public everything they need to know, but engagement with it is likely to be low. Infographics and dashboards help to get the key figures read.

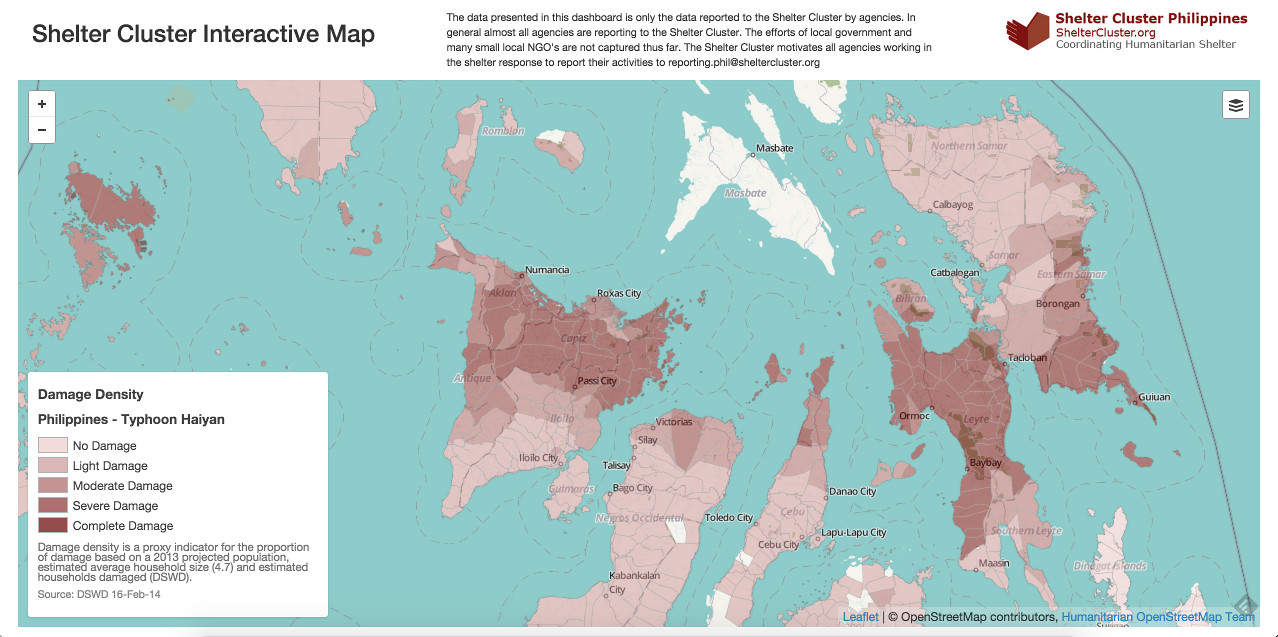

Make Web Maps

Web maps can allow the user to interact with the map by zooming, panning, clicking and turning layers on and off. This extra control means more data can be given to the user and they can customise what they are seeing for their own analysis.

For those who do not know how to code there are services out there that provide web maps. CartoDB is a popular service to get web maps of your data up and running quickly.

For the more adventurous it is possible to code your own web maps with JavaScript. Leaflet is a good library to start with.

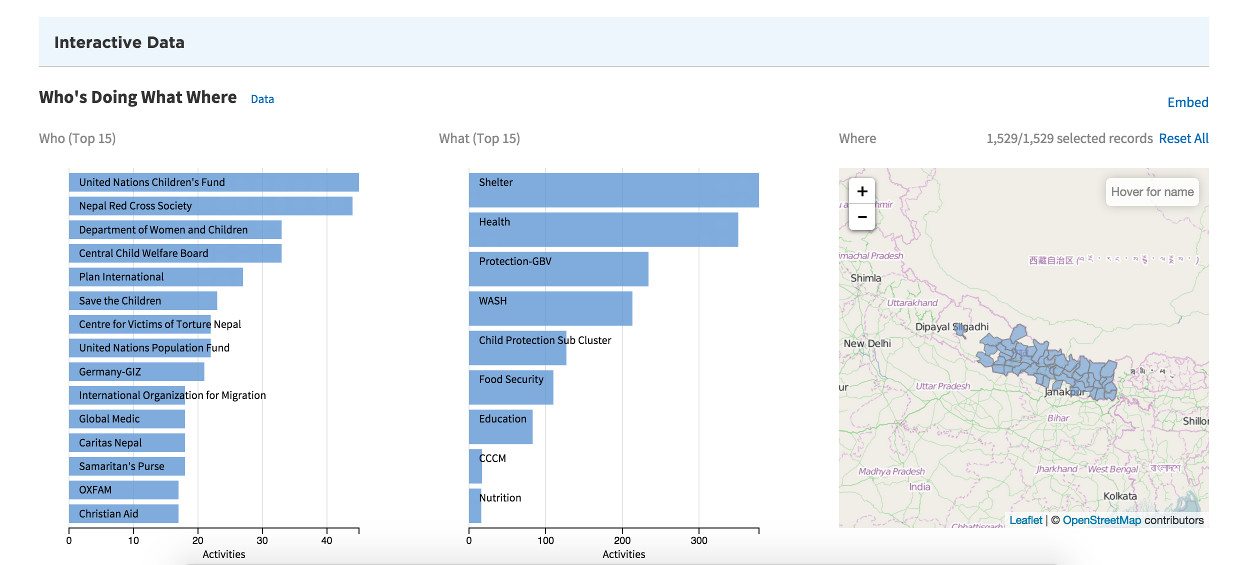

Make Interactive Dashboards

Interactive dashboards are harder to make, but can allow a user to drill down, slice and really analyse a data set.

A popular paid tool for this is Tableau, which also offers a free version for personal use.

If you are familiar with coding, there are some great JavaScript libraries for making online dashboards. It is worth reading up on d3.js, crossfilter.js and dc.js.

Sharing

Share your data in a spreadsheet not a PDF

If you want to share your data for others to use then a spreadsheet is better than a table in a PDF. Data tables in PDFs do not always copy well to a spreadsheet and hours can be wasted getting the data into a usable format. Consider also sharing the data behind maps and reports for other people to make use of.

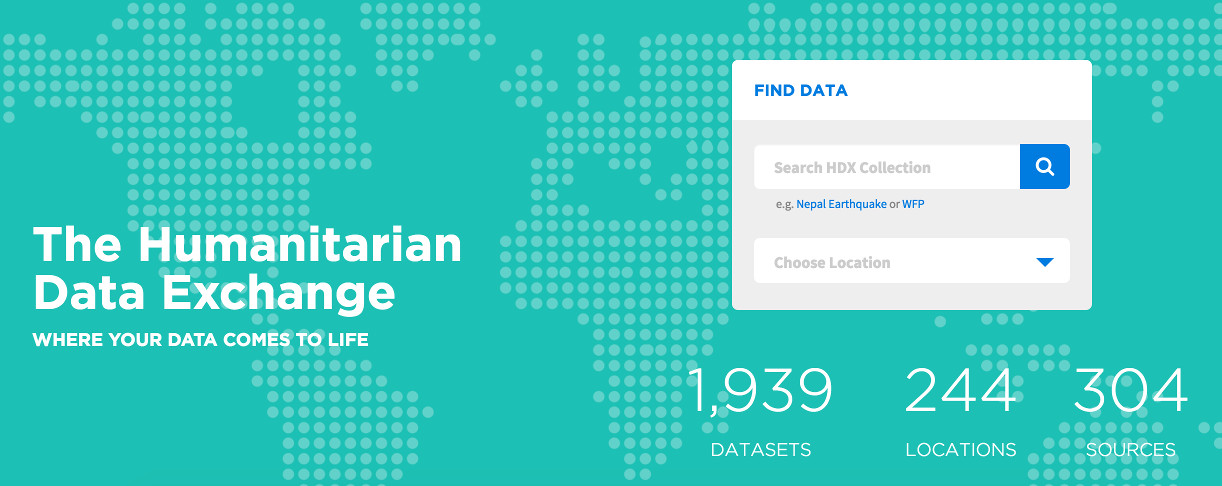

Share open data on HDX

The Humanitarian Data Exchange (HDX) is a data sharing platform for humanitarian data. By sharing data we allow more people to make informed, data-driven decisions.

Share reports and dashboards on ReliefWeb

By sharing your products on ReliefWeb, others in the humanitarian community can find and use them.

Use consistent web addresses for updates

A user may bookmark or link directly to your data set from another location. If updated information is not published at the same web address, they will miss it.

Embed metadata in the document

Data often gets shared in ad hoc ways through emails and online services. If the metadata is not in the data document itself, the two can get separated.

Soft skills

Verbal Communication

While information management products can communicate the data and a message, an information manager will often find themselves in meetings and presentations. To be able to verbally communicate the data and to simplify complex ideas is a powerful tool.

Listen

Listen and be patient while you work out the needs of the users of your IM products. Find out their constraints and resources. Summarise what you think they want and get them to agree. Don't build what you think they need; build what they actually need. Follow the steps on the listening wheel below to help.

Distill the questions

The question a user originally poses, or the product they ask for, might not be what they actually want. Sometimes people bring their own interpretation of how to solve a problem. Discussing the problem and ideas with them can lead to better solutions.

Don't make assumptions about the user

When talking to users or producing products for the user make no assumptions about what they already know. Listen to their constraints and knowledge.

Take breaks and get good sleep

Humanitarian response can be stressful and exhausting work. Take regular breaks and make sure you get enough sleep!

Get Involved


This is an open source project so anybody can add tips and resources to make it more comprehensive.

Contact Simon Johnson for more details.

This project is hosted on Github.

Thanks To...

Andrew Braye

Heidi El Hosaini

Robert Banick

Jean Mège

Jagoda Pietrzak

Shruti Grover